DataPipeline 8.1.0 is now available. It adds support for multi-connection upserting to database tables, JDBC read fetch size, and more. Enjoy.

Category Archives: Java

DataPipeline 8.0 Released

In late December, we released DataPipeline version 8.0.0 to general availability. This might be our longest list of new features and changes yet. Let’s dive in.

11 Java Data Integration Libraries (2023)

Updated: May 2023

With data being produced from many sources in a variety of formats, businesses must have a sane way to gain useful insight. Data integration is the process of transforming data from one or more sources into a form that can be loaded into a target system or used for analysis and business intelligence.

Data integration libraries take some programming burden from the shoulders of developers by abstracting data processing and transformation tasks and allowing the developer to focus on tasks that are directly related to the application logic.

18 ETL Tools for Java Developers (Updated 2023)

Updated: May 2023

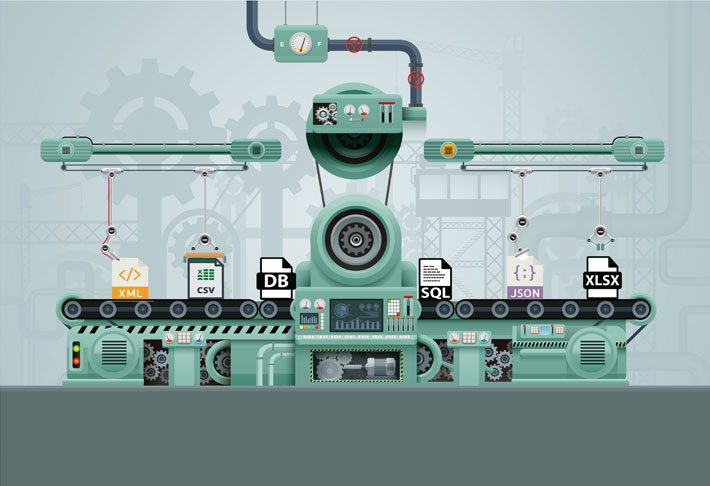

ETL is a process for performing data extraction, transformation and loading. The process extracts data from a variety of sources and formats, transforms it into a standard structure, and loads it into a database, file, web service, or other system for analysis, visualization, machine learning, etc.

ETL tools come in a wide variety of shapes. Some run on your desktop or on-premises servers, while others run as SaaS in the cloud. Some are code-based, built on standard programming languages that many developers already know. Others are built on a custom DSL (domain-specific language) in an attempt to be more intentional and require less code. Others still are completely graphical, offering programming interfaces only for complex transformations.

What follows is a list of ETL tools for developers already familiar with Java and the JVM (Java Virtual Machine) to clean, validate, filter, and prepare your data for use.

Spring Batch vs Data Pipeline – ETL Job Example

Updated: July 2021

Most examples of creating a Spring Batch ETL job require an enormous amount of code for such a routine task. In this post, I will show you how to accomplish the same task, summarizing a million stock trades to find the open, close, high, and low prices for each symbol, using our Data Pipeline framework.
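To make the task itself concrete, here is a minimal sketch of the open/high/low/close summary in plain Java. It uses neither Spring Batch nor the Data Pipeline API; the `Trade` and `Ohlc` classes and the sample prices are assumptions made up for illustration, and trades are assumed to arrive in time order.

```java
import java.util.Arrays;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

public class TradeSummary {

    // Hypothetical trade: a symbol and a price, listed in arrival order.
    static final class Trade {
        final String symbol;
        final double price;

        Trade(String symbol, double price) {
            this.symbol = symbol;
            this.price = price;
        }
    }

    // Running open/high/low/close values for a single symbol.
    static final class Ohlc {
        final double open;
        double high;
        double low;
        double close;

        Ohlc(double firstPrice) {
            open = firstPrice;
            high = firstPrice;
            low = firstPrice;
            close = firstPrice;
        }

        void add(double price) {
            high = Math.max(high, price);
            low = Math.min(low, price);
            close = price; // the latest trade seen so far is the close
        }

        @Override
        public String toString() {
            return "open=" + open + " high=" + high + " low=" + low + " close=" + close;
        }
    }

    // One pass over the trades, keeping one Ohlc per symbol.
    static Map<String, Ohlc> summarize(List<Trade> trades) {
        Map<String, Ohlc> bySymbol = new LinkedHashMap<>();
        for (Trade trade : trades) {
            Ohlc ohlc = bySymbol.get(trade.symbol);
            if (ohlc == null) {
                bySymbol.put(trade.symbol, new Ohlc(trade.price));
            } else {
                ohlc.add(trade.price);
            }
        }
        return bySymbol;
    }

    public static void main(String[] args) {
        List<Trade> trades = Arrays.asList(
                new Trade("ABC", 10.0),
                new Trade("XYZ", 5.0),
                new Trade("ABC", 12.5),
                new Trade("ABC", 9.0),
                new Trade("XYZ", 6.0));
        summarize(trades).forEach((symbol, ohlc) -> System.out.println(symbol + ": " + ohlc));
    }
}
```

Because the summary keeps only one small object per symbol, the same loop works whether the input is five trades or a million streamed from a file.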

How to speed up JDBC inserts?

Updated: May 2023

When trying to assess how knowledgeable a developer is in general and in JDBC in particular, here’s a question I like to ask: how would you speed up inserts when using JDBC?

Here are some options to consider if you ever need to insert data quickly into an SQL database.
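Two options that usually come up first are statement batching and wrapping the inserts in a single transaction. The sketch below shows both with the standard `java.sql` API. It is not taken from the post itself: the `trades` table, its columns, the sample rows, and the batch size of 1000 are assumptions chosen for illustration, and running `main` requires a JDBC driver on the classpath.

```java
import java.sql.Connection;
import java.sql.DriverManager;
import java.sql.PreparedStatement;
import java.sql.SQLException;
import java.util.Arrays;
import java.util.List;

public class BatchInsert {

    // Hypothetical table and columns, used only for illustration.
    static final String SQL = "INSERT INTO trades (symbol, price) VALUES (?, ?)";

    // Inserts all rows in one transaction, sending them to the database
    // in batches of batchSize. Returns the number of batches executed.
    static int insertAll(Connection conn, List<Object[]> rows, int batchSize) throws SQLException {
        boolean previousAutoCommit = conn.getAutoCommit();
        conn.setAutoCommit(false); // avoid one commit per row
        int batches = 0;
        try (PreparedStatement ps = conn.prepareStatement(SQL)) {
            int pending = 0;
            for (Object[] row : rows) {
                ps.setString(1, (String) row[0]);
                ps.setDouble(2, (Double) row[1]);
                ps.addBatch();
                if (++pending == batchSize) {
                    ps.executeBatch();
                    batches++;
                    pending = 0;
                }
            }
            if (pending > 0) { // flush the final partial batch
                ps.executeBatch();
                batches++;
            }
            conn.commit();
        } catch (SQLException e) {
            conn.rollback();
            throw e;
        } finally {
            conn.setAutoCommit(previousAutoCommit);
        }
        return batches;
    }

    public static void main(String[] args) throws SQLException {
        if (args.length == 0) {
            System.out.println("usage: java BatchInsert <jdbc-url>");
            return;
        }
        List<Object[]> rows = Arrays.asList(
                new Object[] {"ABC", 10.0},
                new Object[] {"XYZ", 5.0});
        try (Connection conn = DriverManager.getConnection(args[0])) {
            System.out.println("batches executed: " + insertAll(conn, rows, 1000));
        }
    }
}
```

How much this helps depends on the driver and database; some drivers only send a batch as a single round trip when a driver-specific setting is enabled, so it is worth measuring against your own setup.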

Data Pipeline v4.1 Adds MongoDB Support

We’re excited to introduce Data Pipeline version 4.1, the second update on our 2016 roadmap.

This release features MongoDB integration, expression language additions, and improved transformations and joins. We’ve also thrown in a ton of examples for all the new 4.1 and 4.0 features. Enjoy.

How To Aggregate Twitter Searches Without A Database

One feature of Data Pipeline is its ability to aggregate data without a database. This feature allows you to apply SQL “group by” operations to JSON, CSV, XML, Java beans, and other formats on the fly, in real time. This quick tutorial will show you how to use the GroupByReader class to aggregate Twitter search results.
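For readers who want to see what an in-memory “group by” amounts to, here is a rough sketch using only the Java standard library. It does not use GroupByReader or any other Data Pipeline class; the records, the `user` and `text` field names, and the `countBy` helper are all hypothetical. It is roughly the equivalent of `SELECT user, COUNT(*) ... GROUP BY user`.

```java
import java.util.List;
import java.util.Map;
import java.util.TreeMap;
import java.util.stream.Collectors;

public class GroupByExample {

    // Counts records per distinct value of the given field.
    // Assumes every record contains the field (groupingBy rejects null keys).
    static Map<String, Long> countBy(List<Map<String, String>> records, String field) {
        return records.stream()
                .collect(Collectors.groupingBy(
                        record -> record.get(field), // the "group by" key
                        TreeMap::new,                // keep keys sorted for stable output
                        Collectors.counting()));     // the aggregate
    }

    public static void main(String[] args) {
        // Hypothetical search results, already parsed into field/value maps.
        List<Map<String, String>> results = List.of(
                Map.of("user", "alice", "text", "first post"),
                Map.of("user", "bob", "text", "hello"),
                Map.of("user", "alice", "text", "second post"));

        System.out.println(countBy(results, "user"));
    }
}
```

Swapping `Collectors.counting()` for another downstream collector, such as `Collectors.summingInt` or `Collectors.averagingDouble`, gives the other common SQL aggregates.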

Data Pipeline 3.1 Now Available

Data Pipeline 3.1 is now available for download. This is a milestone release that adds native support for hierarchical data (nested records and multidimensional arrays).

How to convert XML to Excel (2023)

Data Pipeline makes it easy to read, transform, and write XML and Excel files. This post shows you how you too can load data from an on-disk XML file, apply transformations on the fly, and save the result to an Excel file.